OpenClaw NanoBot PicoClaw: Which AI Agent to Use

Compare OpenClaw, NanoBot, and PicoClaw — three open-source AI agents. Find the right one for your hardware, use case, and security needs in 2026.

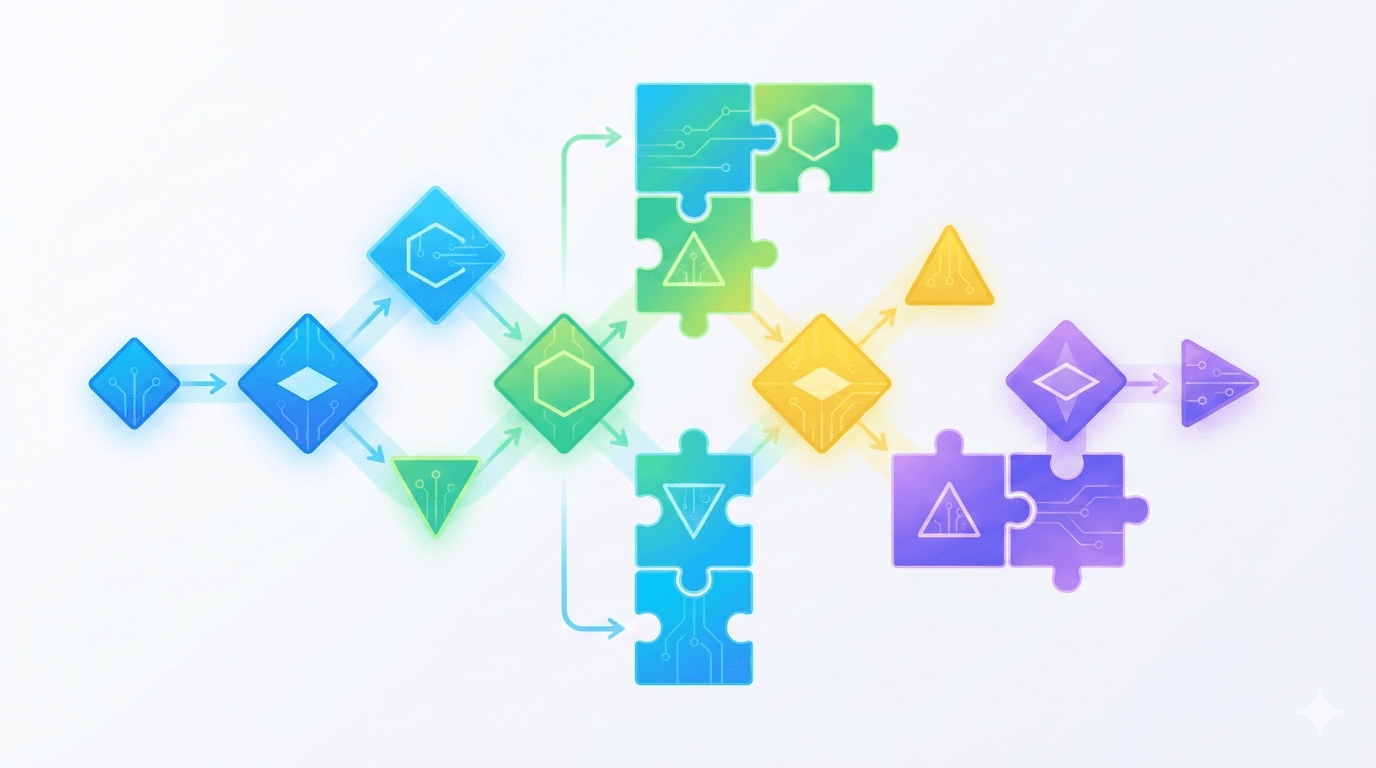

Meta prompting in 2026 is less about magic phrases and more about systems design: combining multiple prompts, models, and evaluation steps into a managed workflow that reduces hallucinations and improves accuracy. Instead of asking "What is the perfect prompt?", teams ask "What sequence of agents, tools, and checks produces the most reliable outcome for this use case?"

Definition: Meta prompting (2026)

Designing a system of prompts that includes routing, critique, cross-checking, and evaluation, often across multiple LLMs, to produce more reliable and bounded behavior.

Vendors like OpenAI increasingly ship capabilities (tool use, structured outputs, system instructions, evaluation tooling) that assume you will orchestrate several components rather than rely on a single raw chat completion. This article focuses on how to do that in real workflows, not just in theory. For reference, see OpenAI's platform documentation.

A single model can go surprisingly far with careful prompting, but it eventually runs into hard limits.

At some point, more prompt tweaks yield diminishing returns. The shift is from better one-shot prompts to better systems: roles, stages, checks, and measurable evaluation.

The most effective orchestration patterns share one trait: they add explicit structure around model behavior. Here are four patterns teams actually ship.

Step-back prompting means asking the system to restate or decompose the task at a higher level before answering. In orchestration terms, you treat this as a dedicated stage that runs before the answer stage.

This improves reliability by surfacing assumptions and missing data early. For complex workflows, persist the plan as structured JSON so downstream tools (or humans) can validate it.
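One way to persist and validate such a plan is sketched below. The `Plan` fields (`goal`, `steps`, `assumptions`, `missing_info`) and the helper names are assumptions made for illustration, not a standard schema; the point is only that a downstream stage can reject a malformed plan before any answer is drafted.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Plan:
    """Hypothetical plan schema for the step-back stage (not a standard format)."""
    goal: str
    steps: list[str]
    assumptions: list[str] = field(default_factory=list)
    missing_info: list[str] = field(default_factory=list)


def parse_plan(raw: str) -> Plan:
    """Parse the model's JSON plan and reject anything malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"plan is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("plan must be a JSON object")

    goal = data.get("goal")
    steps = data.get("steps")
    if not isinstance(goal, str) or not goal.strip():
        raise ValueError("plan needs a non-empty 'goal' string")
    if not isinstance(steps, list) or not steps:
        raise ValueError("plan needs a non-empty 'steps' list")
    if not all(isinstance(step, str) for step in steps):
        raise ValueError("every step must be a string")

    return Plan(
        goal=goal,
        steps=steps,
        assumptions=list(data.get("assumptions", [])),
        missing_info=list(data.get("missing_info", [])),
    )


def ready_to_answer(plan: Plan) -> bool:
    """Only proceed to the answer stage when the plan flags no missing data."""
    return not plan.missing_info
```

A plan that lists anything under `missing_info` can be routed to a clarification step or a human instead of straight to drafting.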

Instead of trusting one model, run several in parallel and accept an answer only when enough of them agree.

This is especially useful for high-risk outputs (external-facing answers, compliance-sensitive content). The main trade-offs are cost and latency, so teams often trigger it only when a router flags elevated risk.
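A minimal sketch of the agreement check follows. The `Model` type is a placeholder for whatever client call you use, `agreed_answer` and `normalize` are hypothetical names, and exact matching after normalization is a deliberately crude stand-in for a semantic comparison. The models are also called one after another here for simplicity rather than in parallel.

```python
from collections import Counter
from typing import Callable, Optional, Sequence

# Hypothetical: any function that maps a prompt to an answer string.
Model = Callable[[str], str]


def normalize(answer: str) -> str:
    """Crude canonical form: lowercase, collapsed whitespace, no trailing period."""
    return " ".join(answer.lower().split()).rstrip(".")


def agreed_answer(
    prompt: str, models: Sequence[Model], min_votes: int
) -> Optional[str]:
    """Return an answer backed by at least `min_votes` models, else None."""
    if not models:
        raise ValueError("at least one model is required")
    answers = [model(prompt) for model in models]
    counts = Counter(normalize(answer) for answer in answers)
    winner, votes = counts.most_common(1)[0]
    if votes < min_votes:
        return None  # no sufficient agreement: escalate or fall back
    # Return the first original (un-normalized) answer that matches the winner.
    return next(answer for answer in answers if normalize(answer) == winner)
```

Returning `None` rather than the most popular answer keeps the "no agreement" case explicit, so the caller has to decide between escalation and a safe fallback.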

A router model chooses which downstream specialist to call.

Routing reduces hallucinations because each specialist is prompted and evaluated within a narrower domain.
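The dispatch logic can be sketched as below. Here `keyword_router` is a keyword-based stand-in for a real router model, and the intent labels and specialist functions are invented for illustration; in practice the router would be a model call that returns one label from a fixed set.

```python
from typing import Callable

# Hypothetical types: a router returns an intent label, a specialist returns an answer.
Router = Callable[[str], str]
Specialist = Callable[[str], str]


def keyword_router(query: str) -> str:
    """Stand-in for a router model: map a query to an intent label."""
    text = query.lower()
    if any(word in text for word in ("refund", "invoice", "billing")):
        return "billing"
    if any(word in text for word in ("error", "bug", "crash")):
        return "technical"
    return "general"


def dispatch(
    query: str,
    router: Router,
    specialists: dict[str, Specialist],
    fallback: str = "general",
) -> tuple[str, str]:
    """Route the query, then call the matching specialist.

    Unknown labels fall back to a default specialist so a bad routing
    decision degrades gracefully instead of raising.
    """
    label = router(query)
    if label not in specialists:
        label = fallback
    return label, specialists[label](query)


# Toy specialists; real ones would wrap narrowly scoped prompts.
SPECIALISTS: dict[str, Specialist] = {
    "billing": lambda q: f"[billing] {q}",
    "technical": lambda q: f"[technical] {q}",
    "general": lambda q: f"[general] {q}",
}
```

Returning the label alongside the answer makes routing decisions easy to log and audit later.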

Instead of relying on spot-checks, add evaluator steps that score every output for properties such as correctness, grounding, and safety.

Evaluators can run synchronously (block unsafe outputs) or asynchronously (shadow mode) to feed monitoring and iteration.
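The difference between the two modes can be sketched as follows. The two evaluators are toy string checks, and the `[source:` citation marker, `Verdict`, and `review` are assumptions made for this example; a production evaluator would more likely be a model call or a rules engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    name: str
    passed: bool
    reason: str = ""


Evaluator = Callable[[str], Verdict]


def cites_source(draft: str) -> Verdict:
    """Toy grounding check: assumes citations look like '[source: ...]'."""
    ok = "[source:" in draft
    return Verdict("cites_source", ok, "" if ok else "no source citation found")


def no_absolute_promises(draft: str) -> Verdict:
    """Toy policy check: flag drafts that promise a guarantee."""
    ok = "guarantee" not in draft.lower()
    return Verdict("no_absolute_promises", ok, "" if ok else "contains a guarantee")


def review(
    draft: str,
    evaluators: list[Evaluator],
    blocking: bool,
    fallback: str = "I can't answer that reliably; escalating to a person.",
) -> tuple[str, list[Verdict]]:
    """Run all evaluators and return (text to deliver, failed verdicts).

    blocking=True  -> synchronous mode: any failure swaps in the fallback.
    blocking=False -> shadow mode: the draft ships, failures are only reported.
    """
    failures = [v for v in (evaluate(draft) for evaluate in evaluators) if not v.passed]
    if failures and blocking:
        return fallback, failures
    return draft, failures
```

Running new evaluators in shadow mode first gives you failure rates on real traffic before you let them block anything.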

| Pattern | Best For | Strengths | Trade-offs |
|---|---|---|---|
| Step-back prompting | Complex, multi-step tasks | Better task clarity, fewer off-target answers | Extra tokens, added latency |
| Cross-model agreement | High-risk decisions, external-facing answers | Fewer severe hallucinations, resilience | Higher cost, more latency, more infra |
| Router + specialists | Mixed workloads, varied intents | Efficiency, better domain accuracy | Requires routing quality and monitoring |
| Evaluation layers | Regulated domains, SLAs, QA pipelines | Continuous evaluation, clearer failure modes | More complexity, added infra cost |

Most robust systems combine at least two patterns in one workflow.

Use this practical process for most production use cases.

Define the target artifact and risk level

Be specific: "internal FAQ answer" vs. "customer-facing policy guidance". Higher risk justifies more orchestration and evaluation.

Start with a baseline single-model solution

Begin with one strong model plus retrieval (if needed). Measure baseline accuracy and failure modes first.

Map the workflow into stages

Typical stages: understand -> plan -> retrieve -> draft -> verify -> finalize. Decide which stages are handled by models vs. tools vs. humans.
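The stage sequence above can be sketched as a list of named functions that pass a shared state dictionary along. Every stage body here is a deterministic stub invented for illustration (keyword lookup instead of retrieval, copy instead of generation); the structure, not the stubs, is the point.

```python
from typing import Callable

State = dict
Stage = Callable[[State], State]


def understand(s: State) -> State:
    s["intent"] = s["query"].strip().rstrip("?").lower()
    return s


def plan(s: State) -> State:
    s["plan"] = ["look up relevant document", "answer from it"]
    return s


def retrieve(s: State) -> State:
    # Stub retrieval: keep documents that share a word with the intent.
    words = s["intent"].split()
    s["docs"] = [d for d in s["corpus"] if any(w in d.lower() for w in words)]
    return s


def draft(s: State) -> State:
    s["draft"] = s["docs"][0] if s["docs"] else "I don't know."
    return s


def verify(s: State) -> State:
    # Stub check: the draft must come verbatim from the corpus.
    s["verified"] = s["draft"] in s["corpus"]
    return s


def finalize(s: State) -> State:
    s["answer"] = s["draft"] if s["verified"] else "Escalating to a human."
    return s


STAGES: list[tuple[str, Stage]] = [
    ("understand", understand), ("plan", plan), ("retrieve", retrieve),
    ("draft", draft), ("verify", verify), ("finalize", finalize),
]


def run_pipeline(stages: list[tuple[str, Stage]], state: State) -> State:
    """Run stages in order, recording which ones executed in state['trace']."""
    for name, stage in stages:
        state = stage(dict(state))  # shallow copy so the caller's dict is untouched
        state["trace"] = state.get("trace", []) + [name]
    return state
```

Because each stage is an ordinary function, any of them can be swapped for a model call, a tool, or a human review step without changing the runner.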

Assign models and prompts per stage

Add routing and guardrails

Use routers for intent, risk, or cost. Add deterministic checks like schema validation and lightweight policy rules before and after LLM calls.
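As an illustration of deterministic checks around an LLM call, the sketch below validates input size before the call and output shape and policy after it. The output schema (`answer`, `sources`), the character limit, and the blocked patterns are all assumptions chosen for the example, not recommended values.

```python
import json
import re

MAX_INPUT_CHARS = 2000  # assumed limit for this example

# Assumed policy rules: a US SSN-like number and one banned marketing phrase.
BLOCKED_OUTPUT = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    re.compile(r"guaranteed returns", re.IGNORECASE),
]


def check_input(text: str) -> list[str]:
    """Pre-call guardrail: return a list of problems (empty means OK)."""
    problems = []
    if not text.strip():
        problems.append("empty input")
    if len(text) > MAX_INPUT_CHARS:
        problems.append("input too long")
    return problems


def check_output(raw: str) -> list[str]:
    """Post-call guardrail: schema validation plus lightweight policy rules."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output must be a JSON object"]

    problems = []
    answer = data.get("answer")
    if not isinstance(answer, str) or not answer.strip():
        problems.append("missing 'answer' string")
        answer = ""  # keep the pattern checks below safe to run
    sources = data.get("sources")
    if not isinstance(sources, list) or not sources:
        problems.append("missing 'sources' list")
    for pattern in BLOCKED_OUTPUT:
        if pattern.search(answer):
            problems.append(f"blocked pattern: {pattern.pattern}")
    return problems
```

Returning a list of problems instead of raising on the first one makes it easier to log every violation for a given output.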

Instrument everything for evaluation

Log inputs, intermediate artifacts, model choices, scores, and final outputs. Version prompts and routing so you can run regression tests.
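One lightweight way to make logs usable for regression tests is to derive a version identifier from the prompt text itself and attach it to every record. The record fields and function names below are assumptions for illustration; the sketch emits one JSON line per stage call.

```python
import hashlib
import json
import time
from typing import Optional


def prompt_version(template: str) -> str:
    """Content hash of the prompt template: any edit yields a new version id."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


def make_record(
    stage: str,
    template: str,
    model: str,
    inputs: dict,
    output: str,
    score: Optional[float] = None,
) -> str:
    """Build one JSON log line for a single stage call."""
    record = {
        "ts": time.time(),
        "stage": stage,
        "prompt_version": prompt_version(template),
        "model": model,  # whatever identifier your provider uses
        "inputs": inputs,
        "output": output,
        "score": score,  # evaluator score, if one ran
    }
    return json.dumps(record, sort_keys=True)
```

Hashing the template means nobody has to remember to bump a version number by hand, and records from different prompt revisions can be separated when comparing evaluation results.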

Iterate with offline plus online evaluation

Build a small golden dataset for offline runs, then roll out gradually and track metrics like escalation rate, user edits, and evaluator scores.
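An offline run over a golden dataset can be as small as the sketch below. The `workflow` argument stands for your full pipeline, the substring-based `score` function is a simplistic placeholder for a real grader, and the threshold is whatever pass rate you decide to enforce.

```python
from typing import Callable


def score(expected: str, actual: str) -> bool:
    """Placeholder grader: pass if the expected phrase appears in the answer."""
    return expected.lower() in actual.lower()


def run_golden(
    workflow: Callable[[str], str],
    golden: list[tuple[str, str]],
    threshold: float,
) -> dict:
    """Run every (question, expected) pair and compare the pass rate to a threshold."""
    if not golden:
        raise ValueError("golden dataset is empty")
    failures = [
        question
        for question, expected in golden
        if not score(expected, workflow(question))
    ]
    pass_rate = (len(golden) - len(failures)) / len(golden)
    return {
        "pass_rate": pass_rate,
        "failures": failures,  # questions to inspect by hand
        "ok": pass_rate >= threshold,
    }
```

Wiring the `ok` flag into CI gives you a regression gate: a prompt, routing, or model change that drops the pass rate below the threshold fails the build.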

This reframes meta prompting as an engineering discipline, not prompt wizardry.

You can build orchestration with custom code, but many teams use an orchestration layer plus an automation layer.

Agent frameworks and orchestration platforms

Provide abstractions for tool-calling, memory, multi-step planning, retries, and policies.

Workflow automation platforms

Useful for stitching model calls into business processes: triggers, webhooks, branching, CRM updates, and alerting. Two widely used options are n8n and Make.

Retrieval and vector search layers

Provide grounding by supplying relevant documents. Often separated from orchestration to keep the LLM workflow simpler and more testable.

Logging and evaluation tooling

Captures prompts, responses, metrics, and feedback, then runs evaluation jobs across historical data.

Separating "AI reasoning" from "business plumbing" makes it easier to swap models and iterate on prompts without breaking integrations.

Use this matrix to pick a sensible starting point.

| Use Case | Recommended Pattern | Best For | Tool Types |
|---|---|---|---|
| Customer support FAQ bot | Step-back prompting + evaluation | Fewer hallucinated answers, safer policy behavior | Orchestration layer, retrieval, evaluation |
| Internal knowledge assistant | Router + specialists + retrieval | Mixed intents and content types | Agent platform, vector search, logging |
| Code review or refactoring | Cross-model agreement + evaluator | Safer suggestions, fewer subtle mistakes | Specialists, evaluation, monitoring |
| Data analysis assistant | Planner + tool-calling + evaluator | Traceable reasoning and correctness | SQL tools, orchestration, eval dashboards |
| Regulated drafting (legal, finance) | Cross-model agreement + human-in-loop + strict evaluation | Minimizing critical failures | Orchestration, ticketing integration, eval |

If unsure, start with a single-model plus retrieval baseline, then add orchestration patterns one at a time, only where the baseline falls short.

| Checklist Item | Status |
|---|---|
| Clear use case and risk level defined | ☐ |
| Baseline measured (single model, plus retrieval if needed) | ☐ |
| Orchestration patterns selected for risk and latency budget | ☐ |
| Prompts and roles documented per stage (router, planner, executor, evaluator) | ☐ |
| Logging for inputs, outputs, intermediate steps, and model choices | ☐ |
| Small golden dataset built for offline evaluation | ☐ |
| Automated eval runs set up with pass/fail thresholds | ☐ |
| Fallback strategy designed (human escalation, safe templates, or "I don't know") | ☐ |
| Gradual rollout with monitoring and alerting | ☐ |

Meta prompting and heavy orchestration are not always the right move.

A good heuristic: if you cannot justify each additional model call in terms of risk reduction or business value, do not add it.

Teams that treat prompts as one-off incantations eventually hit reliability ceilings: hallucinations, inconsistent tone, and policy drift. Meta prompting in 2026 reframes the job as building systems of prompts, models, and evaluators that can be monitored, tested, and improved like any production service.

Start with a simple baseline. Add orchestration only where it clearly improves accuracy or reduces risk. Most importantly, invest in evaluation early so reliability is measured, not guessed.

Classic prompt engineering focuses on crafting a single strong prompt for one model call. Meta prompting focuses on systems of prompts: routers, planners, executors, and evaluators, often across multiple model calls. It treats prompting, model selection, and evaluation as a coordinated workflow rather than isolated messages.

No. Orchestration helps when it adds structure that enforces grounding (retrieval), cross-checking (multiple candidates), or explicit evaluation. Chaining more calls without clear roles can compound errors. The value comes from disciplined patterns, not more agents.

Not necessarily. You can get strong gains using different model sizes or configurations from one provider. Using multiple providers can add resilience (especially in cross-model agreement), but doing so increases integration and monitoring overhead.

Use offline plus online evaluation. Offline: run a golden dataset through the full workflow and score correctness, grounding, and safety. Online: track user edits, escalation rates, evaluator scores, and incidents. Regression testing is essential when prompts, routing, or models change.

Add one when failures are costly (legal, finance, medical-adjacent) or when you must enforce detailed policies. For low-risk use cases, simple heuristics plus spot-checking may be enough. If you commit to SLAs or external-facing experiences, evaluation becomes much more valuable.
